Tracking

The goal is to locate moving objects in the environment and to track them. This has obvious uses in security and process monitoring. Additionally, it is an important skill in developmental robotics. Often, the objects that are most interesting in the environment are those that are moving. These are the objects that are most likely to eat you, or, in a more contemporary environment, to run you over.

Consider the following video (1.6 MB). We would like to be able to track these individuals as they run around the room. Notice that the individuals are not wearing clothing that is distinctive in any way (thus making tracking easier) and that they are in a cluttered, natural setting.

Step (1), Background Subtraction. The video consists of 279 frames, and each frame is of size 240x320x3. For each pixel and for each of R, G, and B, the mode was calculated across all 279 frames. The result was an image of the background with all of the moving objects (people) removed. (If the background were slowly changing over time, then a moving average (or moving mode) would have to be computed instead.) It is surprising that this works so well, note that at no time was the unoccluded background in the video. The mode image is shown here.

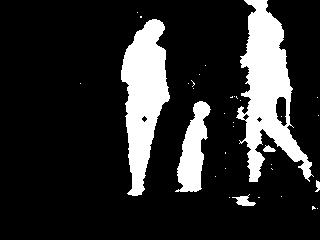

Step (2), Identifying Dynamic Pixels. For each frame, all of the pixels that did not match the mode image are marked. This is done by calculating the Euclidean distance between the R, G, and B values of each frame pixel and its corresponding mode pixel. A threshold is then used to determine which pixels are dynamic. An example in image form is shown, the white pixels correspond to dynamic pixels.

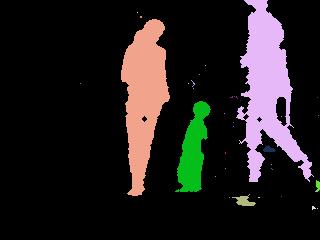

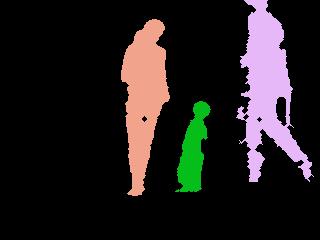

Step (3), Cluster the Dynamic Pixels. Agglomerative clustering is too slow to be used with image pixels. However, we can take advantage of the fact that the pixels that we want to cluster are at discrete locations. An algorithm similar to flood fill for coloring an area was created to do this task. Until each dynamic pixel has been assigned a cluster, the process loops by scanning a cursor over the image. For each loop, the cursor begins in the upper-left corner of the image, it then scans the image until it finds a first unassigned dynamic pixel. It then marks that pixel as being in cluster k and continues to scan. While it scans, any pixel within 2 pixels of a pixel in cluster k is then put into cluster k. When the cursor reaches the end of the image it scans again starting from the bottom. In the example image shown, each color represents a cluster.

Step (4), Take up to 5 Clusters. Take the 5 clusters with the greatest number of pixels and remove those clusters with fewer than 150 pixels.

Step (5), Tracking. Tracking was done as in [1]. Initially, each cluster is assigned a tracker. Once trackers are assigned, when the next frame is seen each tracker attempts to locate its cluster again. A tracker considers a cluster k2 to be a continuation of its current cluster k1 if the distance between k1 and k2 is below a threshold, and no other cluster is closer to k1 than k2. The distance between two clusters is the sum of the Euclidean distance of their centroids, and the absolute value of the difference between their radii, where the radius for a cluster is defined as the distance between its centroid and its farthest point. This tracking procedure is shown in this video (8.1 MB). Colors correspond to trackers. Every time the color changes this signifies that one tracker was lost and a new one was created. There are many obvious ways to improve the tracking such as using a color histogram for the tracked objects, and keeping trackers for more than one timestep until their original cluster is again found.

References

[1] Joseph Modayil and Benjamin Kuipers, "Bootstrap learning for object discovery",

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS-04),

742-747.